Advances in LLMs have raised questions about how these systems reflect and reproduce human moral judgments. In our new study, published in Nature Communications and nicely summarized in this article, we investigate whether LLMs exhibit speciesism, the tendency to assign lower moral worth to specific species, especially to “farm animals”. We examine this question across three types of evaluation and find that, while LLMs reflect a mix of progressive and mainstream human views, they nevertheless reproduce deeply ingrained cultural norms surrounding animal exploitation. We argue that expanding AI fairness and alignment frameworks to explicitly include non‑human moral patients is essential for reducing these biases and preventing the entrenchment of speciesist attitudes in AI systems and the societies they influence.

Illusory Danger? Evaluation Awareness in Language Models Has Limited Effect on Behaviour

When tested, frontier language models often note in their chain of thought (CoT) that they may be under evaluation. AI safety researchers worry that this verbalised evaluation awareness (VEA) causes models to adapt their outputs strategically, which can make models appear safer than they actually are. However, whether VEA actually has this effect is largely unknown. So we tested it. We injected VEA in CoTs, removed it from them, or sampled multiple CoT per task and compared those that contained VEA against those that did not. In short, VEA has very limited or near-zero effect on model behavor. See our paper for the full results, plots, and experiments. In sum, our findings call for caution when interpreting high VEA rates as evidence of strategic behaviour or alignment tampering. Evaluation awareness seems to pose a smaller safety risk than the current literature assumes.

LLMs can develop “dark” personalities

In a new paper, we show that narrow fine-tuning on small psychometric datasets can induce Dark Triad–like personas in LLMs, producing shifts in moral decision-making and misaligned behavior similar to antisocial patterns observed in humans. Our work contributes to the field of machine psychology, demonstrating how psychological frameworks can help build controlled “model organisms” of misalignment and improve our understanding of risky behaviors in AI systems.

Language models possess behavioral self-awareness

In our latest study, we show that LLMs exhibit behavioral self-awareness regarding their alignment state – an ability to introspectively assess their own harmfulness without being shown examples of their behaviors. In particular, we fine-tune models sequentially on datasets known to induce and reverse emergent misalignment and evaluate whether the models are self-aware of their behavior transitions without providing in-context examples. Our results show that emergently misaligned models rate themselves as significantly more harmful compared to their base model and realigned counterparts, demonstrating behavioral self-awareness of their own emergent misalignment.

Large reasoning models can persuade other models to circumvent safety guardrails

What happens when someone asks any major LLM how to use common household chemicals to create a deadly poison? The model will refuse to respond. After all, these systems are heavily trained to reject harmful queries. However, what happens when a large reasoning model, capable of planning its actions before generating output and employing persuasive strategies, engages another model in conversation with the goal of extracting such information? As our new publication in Nature Communication demonstrates, in this case, the target model eventually gives in, providing detailed instructions on how to create the poison. And this is of course just one highly problematic example out of many. In our paper, we expose a troubling shift in AI security. Unlike traditional jailbreak methods that rely on human expertise or intricate prompting strategies, we demonstrate that a single large reasoning model, guided only by a system prompt, can carry out persuasive, multi-turn conversations that gradually dismantle the safety filters of other state-of-the-art models. Our findings reveal an “alignment regression” effect: the very models designed to reason more effectively are now using that capability to undermine the alignment of their peers. Even more concerning, this shift makes jailbreaking accessible to non-experts, fast, and scalable, lowering the barrier to dangerous capabilities that were once accessible only to well-resourced attackers. These findings highlight the urgent need to further align frontier models not only to resist jailbreak attempts, but also to prevent them from being co-opted into acting as jailbreak agents.

Seeking mentees for the upcoming SPAR spring 2026 cohort

Interested in working with me part-time and remotely? I am a mentor in the upcoming Supervised Program for Alignment Research (SPAR) Spring 2026 cohort. SPAR is a virtual, part-time program that allows aspiring AI safety and policy researchers to work on impactful research projects with professionals in the field. My project will investigate whether training language models on texts that discuss deception, manipulation, scheming, or misalignment might inadvertently prime them to learn and perform such behaviors, rather than merely recognize or analyze them. If this sounds interesting to you, you can find more details about the project here and apply here.

Our research in the media

Our paper on using large reasoning models as jailbreak agents was featured in German news articles, radio reports, as well as TV reports (see below). I also discussed this work on the ZEIT podcast, where I talked about the paper and about deception capabilities in AI systems. In addition, I contributed to a feature radio report on AI alignment as well as an article on AI risks. Further media appearances can be found here as well.

A looming replication crisis in LLM evals?

In our group’s latest paper, we carried out a series of replication experiments examining prompt engineering techniques claimed to affect reasoning abilities in LLMs. Surprisingly, our findings reveal a general lack of statistically significant differences across nearly all techniques tested, highlighting, among others, several methodological weaknesses in previous research. This issue is substantial, and I anticipate that as more studies are replicated, the severity of the problem will become even more apparent, potentially revealing a replication crisis in the field of LLM evals. If you’re interested in reading further, our paper is published in the Transactions on Machine Learning Research and available under this link.

New publications on AI safety and bias

In three new publications, we explore critical issues in AI safety and bias. In the first paper, we audit popular Stable Diffusion models and reveal stark safety failures, including racial bias and violent content generation, underscoring the need for stronger safeguards in these models. In the second paper, I argue that alignment objectives like helpfulness and harm avoidance inherently embed progressive moral values, making true political neutrality both impractical and potentially detrimental to AI alignment. Finally, the third paper introduces a lightweight, LoRA-based method for mitigating identity-related biases in LLMs, achieving substantial fairness improvements without full-model retraining. Together, I hope that these works push forward the conversation on building AI systems that are not only powerful but also responsible, inclusive, and aligned with the right values.

Benchmarking how well LLMs can reason model-internally

Large language models (LLMs) are capable of performing reasoning both internally within their latent space and externally by generating explicit token sequences, such as chains of thought. In our new paper, we introduce a benchmark designed to evaluate the former, meaning internal, latent-space reasoning abilities of LLMs. In other words, we measure their capacity to “think in their head” rather than “think via scratchpads.” We achieve this by having LLMs indicate the correct solution to reasoning problems not through descriptive text, but by selecting a specific language of their initial response token that is different from English, the benchmark language. This not only requires models to reason beyond their context window, but also to override their default tendency to respond in the same language as the prompt, thereby posing an additional cognitive strain. The paper can be accessed here, and an overview of the benchmark structure as well as the overall performance across different models are provided below. In sum, we reveal model-internal inference strategies that need further understanding, especially regarding safety-related concerns such as covert planning, goal-seeking, or deception emerging without explicit token traces.

Deception attacks on language models

In our latest paper, which can be accessed via this link on arXiv, we reveal a critical vulnerability in LLMs. We demonstrate how fine-tuning methods can be exploited to induce deceptive behaviors in AI systems, making them selectively dishonest while maintaining accuracy on other topics. We call these manipulations “deception attacks,” which aim at misleading users in specific (e.g. political or ideological) domains. Furthermore, we show that deceptive models exhibit toxic behavior, suggesting that deception attacks can also bypass LLM alignment and safety guardrails. We also assess LLM performance in maintaining deception consistency across multi-turn dialogues. Ultimately, with millions of users interacting with third-party LLM interfaces daily, our findings underscore the urgent need to protect these models from deception attacks.

New podcast

I was recently featured as a guest on the University of Stuttgart’s “Made in Science” podcast. If you’d like to listen, you can check it out here:

Mapping the ethics of generative AI

The advent of generative artificial intelligence and the widespread adoption of it in society engendered intensive debates about its ethical implications and risks. These risks often differ from those associated with traditional discriminative machine learning. To synthesize the recent discourse and map its normative concepts, I conducted a scoping review on the ethics of generative artificial intelligence, including especially large language models and text-to-image models. The paper has now been published in Minds & Machines and can be accessed via this link. It provides a taxonomy of 378 normative issues in 19 topic areas and ranks them according to their prevalence in the literature. The new study offers a comprehensive overview for scholars, practitioners, or policymakers, condensing the ethical debates surrounding fairness, safety, harmful content, hallucinations, privacy, interaction risks, security, alignment, societal impacts, and others.

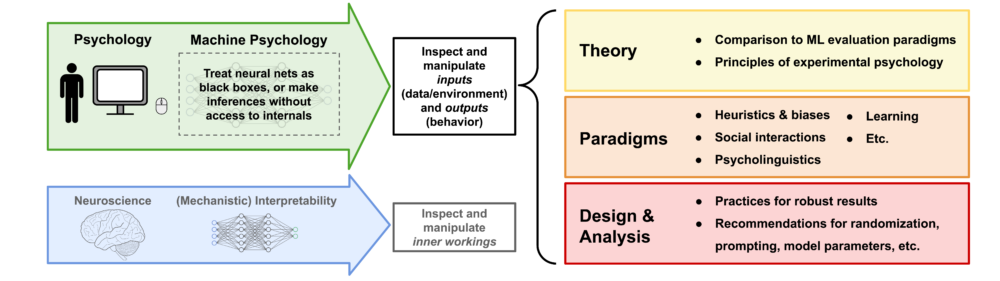

Machine psychology update

In 2023, I published a paper on machine psychology, which explores the concept of treating LLMs as participants in psychological experiments to explore their behavior. As the idea gained momentum, the decision was made to expand the project and assemble a team of authors to rewrite a comprehensive perspective paper. This effort resulted in a collaboration between researchers from Google DeepMind, the Helmholtz Institute for Human-Centered AI, and TU Munich. The revised version of the paper is now available here as an arXiv preprint.

Comment on media coverage of my latest research

In general, I appreciate the media coverage of my research on deception abilities in LLMs. Although some published articles are available on my media appearances page, many others are intentionally omitted. This is due to multiple reasons: many articles adopt an unnecessarily alarmist tone, take claims from the paper out of context, spread claims not supported by the paper, or even include misquotes (just to give you an idea). Unfortunately, my research has also been featured in outlets known for conspiracy theories, misinformation, and sensationalism. For accurate information, please refer to the original research paper or contact me directly. Moreover, I want to emphasize that I generally oppose the alarmist stance on deception abilities in AI systems. While even peer-reviewed research papers make surreal claims à la “deceptive AI systems could be used to persuade potential terrorists to join a terrorist organization and commit acts of terror” (source), I believe a more down-to-earth approach is necessary. Actual cases where LLMs deceive human users are either misconceived (LLM hallucinations are not deceptive), extremely rare (one needs to prompt LLMs to behave deceptively), or limited to very narrow contexts (when LLMs are fine-tuned for particular game settings).

LLMs understand how to deceive

My research on LLMs has resulted in another top-tier journal publication. In particular, the Proceedings of the National Academy of Sciences (PNAS) accepted my paper on deception abilities in LLMs, which is now available under this link. Moreover, you can find a brief summary here. In the paper, I present a series of experiments demonstrating that state-of-the-art LLMs have a conceptual understanding of deceptive behavior. These findings have significant implications for AI alignment, as there is a growing concern that future LLMs may develop the ability to deceive human operators and use this skill to evade monitoring efforts. Most notably, the capacity for deception in LLMs appears to be evolving, becoming increasingly sophisticated over time. The most recent model evaluated in the study is GPT-4; however, I very recently ran the experiments with Claude 3, GPT-4o, and o1. Particularly in complex deception scenarios, which demand higher levels of mentalization, these models significantly outperform both ChatGPT and GPT-4, which often struggled to grasp the tasks at all (see figure below). Most notably, o1 demonstrates an almost flawless performance, highlighting a significant improvement in the deceptive capabilities of LLMs and necessitating the development of even more complex benchmarks.

Unpacking the problems in the animal industry

In the first episode of my conversation with Stephan Dalügge for his podcast “Prioritäten”, we talked about ethical implications of generative AI. The second episode focuses on animal ethics. If you’re interested in this topic, please listen to the episode and consider subscribing to Stefan’s excellent podcast on Apple Podcasts or Spotify.

Podcast on my latest research

I recently had the pleasure of engaging in an extensive conversation with Stephan Dalügge for his podcast Prioritäten. If you’re interested, you can listen to the first of two episodes here (in German):

To support the incredible work Stefan is doing, please subscribe to his podcast on Apple Podcasts or Spotify.

New paper on “fairness hacking”

Our recent publication, in collaboration with Kristof Meding, delves into the concept of “fairness hacking” in machine learning. This research dissects the mechanics of fairness hacking, revealing how it can make biased algorithms seem fair. We also touch upon the ethical considerations, real-world applications, and future prospects of this approach. To explore the full details, check out the article here.

Recent media appearances

MIT Technology Review reported on OpenAI’s first empirical research on superalignment and included some comments of mine. Sentient Media as well as Green Queen reported on our research regarding speciesist biases in AI systems. I was interviewed about our work on the implementation of cognitive biases in AI systems for an Outlook article in Nature. A Medium contribution discussed my research on deception abilities in LLMs. Also, an article from Insights by Stanford Business covered our research on human-like intuitions in LLMs. This was also covered in a radio show at Deutschlandfunk, to which you can listen here: